Get Involved

There are many ways to get involved with biology and the Society's work. You can find us at the UK's biggest science festivals, enter our awards and competitions, apply for grants and funding, volunteer with us, or join in or help organise events run as part of Biology Week.

Biology Week

Biology Week will take place from 7th to 11th October 2024.

To celebrate all aspects of the biosciences, we organise an annual Biology Week, with a range of events for everyone from children to professional scientists. We hope that many others will do the same.

Come and volunteer with us

Come and find us or volunteer with the Society at a range of science fairs and festivals around the country. For more information about volunteering at UK science festivals, contact our outreach team.

Awards

See our Awards page.

The Society runs a range of awards for our members and other biology enthusiasts. These include our Outreach and Engagement Awards, open to researchers who have brought good-quality science to non-academic audiences in engaging ways, as well as awards that recognise excellent school and higher-education teachers and technicians.

Competitions

See our Competitions page.

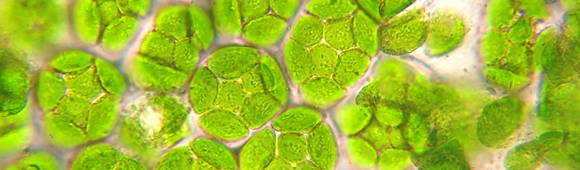

Enter our annual photography competition, which invites amateurs to submit photographs on a particular theme. You can also find out more about the competitions open to school students, which aim to challenge and motivate students with an interest in biology to expand and extend their talents.

Grants

See our Grants page.

The RSB offers a range of funding support for biologists. We offer travel grants, as well as outreach and engagement grants that support members in organising events in their area.

Resources

We offer a range of information packs and resources to help you organise your own events and get more people learning about biology.