Focus on: Image Manipulation

After nearly two decades of efforts to educate researchers and publishers, many papers still contain images that have been inappropriately edited

The Biologist 65(3) p30

In the early 2000s, Mike Rossner – then editor of the Journal of Cell Biology (JCB) – was checking images in a manuscript due for publication when he spotted something strange. Converting the image to a different file format revealed the tell-tale marks that a section had been cut out and pasted from another image. "I immediately knew this would be a huge problem," says Rossner.

After deciding to investigate, he found that around 1% of all papers accepted by the journal needed to be revoked due to suspect editing of images[1]. The issue was not just limited to a few researchers making blatantly fake images. Rossner found that up to 25% of accepted papers contained images that had been inappropriately edited in some way – not clear attempts to falsify results, but tweaks, cropping or adjustments that might be embellishing or enhancing visual data.

Modern photo-editing software, now available to any researcher with a computer or smartphone, has made adjusting images quick and easy. High-profile cases of image fraud continue to occur regularly in the biosciences[2], but aside from Rossner's studies on JCB papers (he found the number of papers showing signs of serious image fraud remained at almost exactly 1% for more than a decade, from 2002 to 2013), there is little quantitative data on how widespread the problem is.

Blots are the most commonly manipulated type of image, says Rossner, who is now a specialist 'image detective' and image-fraud consultant. "Almost any image can be manipulated – I've seen it in electron micrographs, fluorescence microscopy, photographs of yeast colonies."

In recent years, some journals have joined the JCB in developing policies or employing technical editors[3] to screen images for signs of editing before publication, but it is still far from standard and some publishers only have the capacity to check a certain proportion of incoming images.

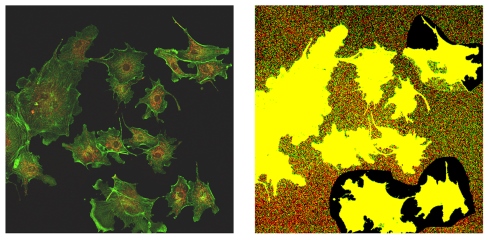

Adjusting the contrast of an image can reveal the tell-tale signs of editing. Here, the higher contrast picture (right) reveals at least two sections where the background is different, suggesting these sections have been copied and pasted into the original image from elsewhere.

Spotting signs of editing is not straightforward either. Software is available to help organise and check multiple manuscript images for signs of editing or plagiarism[4], but it still requires a person with expertise to make a judgement on each one. Some computer scientists and organisations are exploring tools to detect image manipulation automatically, including the US's Defense Advanced Research Projects Agency, which takes the view that fake media – including faked video and audio – is now a national security concern.

For now, in scientific publishing at least, the only fail-safe method is the somewhat laborious human analysis of images, one by one, by an expert eye. Despite the challenges of implementing this at every journal, it's clear the research community and publishing sector need to take further steps to ensure images are as robustly reviewed as other forms of research data.

• Adjustments to settings such as brightness or contrast should be performed equally across the whole image and in the same manner to the control data.

• The enhancement of particular colours or tones should be used only to reveal a feature already present in the original data.

• No specific feature within an image should be introduced, removed, obscured or moved.

• Disclose details of any adjustments and software used in the figure legends or in the methodology.

• Take care when resizing images: enlarging an image can mean the computer creates pixels (and therefore data) not present in the original.

• Keeping good records of when and how your original data was obtained will help if manuscript images are flagged as suspicious.

• In general, images should be an accurate representation of what was observed experimentally. Adjustments to make an image clearer are acceptable, but beware of making weak data stronger.
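The point about resizing can be illustrated with a small sketch. It assumes a single row of greyscale pixel values and simple linear interpolation; real image editors may use other resampling methods. The function name `enlarge_row_linear` and the sample values are hypothetical, chosen only to show that enlarging produces intensities that were never recorded.

```python
def enlarge_row_linear(row, factor):
    """Enlarge a 1-D row of pixel intensities by linear interpolation.

    Illustrative assumption: `factor` is a whole number and the row has at
    least two pixels. New pixels are placed between each original pair.
    """
    n = len(row)
    out_len = (n - 1) * factor + 1
    enlarged = []
    for i in range(out_len):
        pos = i / factor              # position in original pixel coordinates
        left = int(pos)               # nearest original pixel to the left
        right = min(left + 1, n - 1)  # nearest original pixel to the right
        frac = pos - left             # how far we are between the two
        value = row[left] * (1 - frac) + row[right] * frac
        enlarged.append(round(value))
    return enlarged


# Hypothetical measured intensities (0 = black, 255 = white).
original = [10, 200, 30]
enlarged = enlarge_row_linear(original, 2)

# Values the software created that were not in the recorded data.
invented = [v for v in enlarged if v not in original]

print(enlarged)  # [10, 105, 200, 115, 30]
print(invented)  # [105, 115]
```

In this toy case two of the five pixels in the enlarged row are computed rather than observed, which is why resizing is worth disclosing alongside other adjustments.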

What can journals and institutions do?

Set standards

• Journals should be clear on what type of image editing is unacceptable. The Journal of Cell Biology's author instructions[5] and its 2004 editorial on image manipulation[1] are a good place to start when developing guidelines or standards.

• Principal investigators or laboratory directors should make all research staff aware of image-manipulation guidelines and compare manuscript images to original data before submitting a paper to a journal.

Screen images

• Adjusting brightness, tone and contrast can reveal hidden layers or inconsistencies caused by edits.

• It is unrealistic to expect reviewers to check all images and figures for evidence of inappropriate editing, but it can be done by trained editorial staff on accepted papers prior to publication.

• Software can help organise large numbers of images for rapid screening by eye, but programs that automatically screen images are still in development.

• Some journals without the capacity to screen every image in-house do spot checks on random papers or employ companies to perform image forensics on submissions.
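As a rough illustration of the first point in this list, the sketch below applies a linear contrast stretch to a made-up greyscale image in which a pasted region has a slightly different background. The function `stretch_contrast`, the pixel values and the chosen intensity window are assumptions for illustration only; they do not describe any journal's screening tool.

```python
def stretch_contrast(image, low, high):
    """Map intensities in [low, high] linearly onto 0..255, clipping outside.

    The same mapping is applied to every pixel, so the adjustment is
    uniform across the whole image.
    """
    scale = 255 / (high - low)
    return [
        [max(0, min(255, round((pixel - low) * scale))) for pixel in row]
        for row in image
    ]


# Hypothetical image: background intensity 10, with a 2x2 pasted region
# whose background is 13. A difference of 3 out of 255 is hard to see by eye.
image = [
    [10, 10, 10, 10, 10, 10],
    [10, 10, 13, 13, 10, 10],
    [10, 10, 13, 13, 10, 10],
    [10, 10, 10, 10, 10, 10],
]

# Stretch a narrow window around the background level (assumed values).
stretched = stretch_contrast(image, low=8, high=16)

before = image[1][2] - image[0][0]          # difference before stretching
after = stretched[1][2] - stretched[0][0]   # difference after stretching

print(before, after)  # 3 95
```

After stretching, the pasted region stands out clearly from its surroundings. A person still has to judge whether such a discontinuity reflects inappropriate editing or has an innocent explanation.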

Investigate misconduct

• Investigating images prior to publication benefits all parties if adjustments are found to be inappropriate.

• If image manipulation is inappropriate, but not an attempt to falsify results, journal editors can ask to see the original, unedited images for comparison or ask the authors to submit new or remade images.

• The JCB revokes acceptance of any paper where four editors agree there is evidence that image manipulation calls the paper's conclusions into question. The journal does not investigate intent.

• At the institutional level, research-integrity officers and investigative committees can seek an independent evaluation of allegations they receive.

1) Rossner, M. & Yamada, K.M. What's in a picture? The temptation of image manipulation. Journal of Cell Biology 166(1), 11–15 (2004).

2) Image Fraud articles via retractionwatch.com

3) 'Biology society hiring editors to screen images'. Retraction Watch, April 2017.

4) Forensic Tools, Office of Research Integrity.

5) Editorial policies – data integrity and plagiarism. Journal of Cell Biology, updated 29 March 2018.